Data remains king, and the web is the largest repository of it. Thus, web scraping has become an integral instrument for businesses and researchers in their quest to conquer the vast realm of digital data.

With this simple but powerful technique, businesses and researchers can quickly collect huge amounts of data that can be analyzed and used in a variety of ways.

But like any other field, web scraping has its intricacies and complexities. In fact, professional web scrapers earn up to $128,000 in salary, which goes to show the value of, and demand for, their craft.

So whether you’re planning to dive into market research by dissecting your competitors' strategies, or you’re simply looking to automate your data collection process, web scraping is your trusty sidekick.

In this guide, we discuss what web scraping is, how it is done, the risks associated with it, and the best practices for doing it well.

Let's break it down, shall we?

What is Web Scraping?

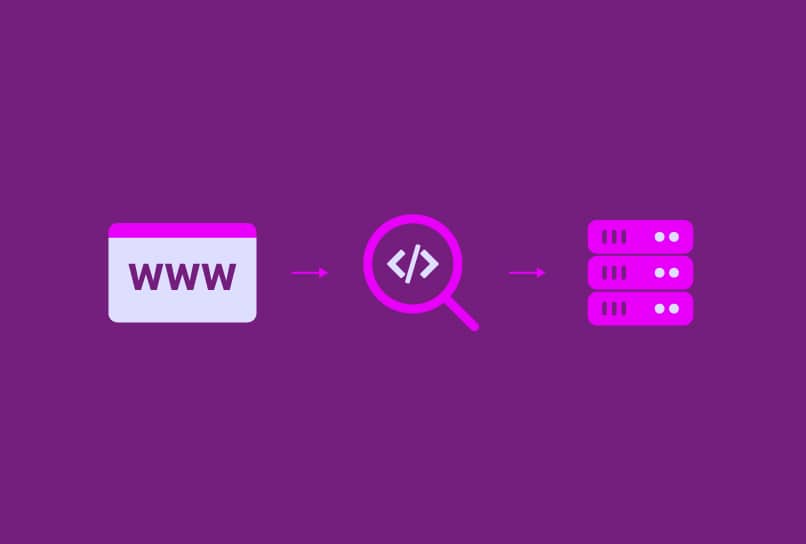

Web scraping is the process of extracting data from websites using automated tools and scripts. Its purpose is to collect large amounts of data quickly and efficiently, which can then be analyzed and used for purposes such as market research, competitor analysis, and content aggregation.

Web scraping can be used for a variety of applications, including:

- price monitoring,

- lead generation,

- sentiment analysis, and

- content creation.

Web scraping is a multi-step process and requires a certain level of expertise to carry out. If you are a beginner, you need to understand the basics before you attempt it.

Understanding the Basics of Web Scraping

The World Wide Web, or the 'Web', is essentially a system and set of conventions for data aggregation. It is made up of several zettabytes of information, arranged so that it is shareable and accessible to everyone. This information is organized in layers, and the smallest, simplest layer is the web page. Several web pages make up a website.

Web pages are created using HTML (Hypertext Markup Language). HTML provides the skeletal structure for the content on a web page, including text, images, and links, while CSS (Cascading Style Sheets), in simple terms, adds the styling.

So, at its core, web scraping is about seizing the data treasures hidden within a page's HTML, often locating them with the help of CSS selectors. HTML parsing is the bread and butter of web scraping; it is the process of analyzing the HTML code and identifying the elements that contain the desired data.

Web scraping can be done manually, but it is usually automated with scripts and other tools. This is because a single web page can contain many lines of HTML code, and sorting through them by hand takes a lot of time.

Web Scraping Techniques

Now, let's explore the different techniques web scrapers use to get the job done. We mentioned one earlier: HTML parsing. In addition to that, there is another technique called DOM parsing. Let’s break down both of them.

A. HTML Parsing

HTML parsing involves analyzing the HTML code of a web page and identifying the elements that contain the desired data. This is done using a web scraping tool that can read and interpret HTML code.

The steps involved in HTML parsing include:

- sending a request to the website,

- receiving the HTML code in response,

- parsing the HTML code to identify the relevant elements, and

- extracting the data from those elements.
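The four steps above can be sketched with nothing but Python's standard library. This is a minimal illustration under stated assumptions, not how any particular scraping tool works: it fetches a page with `urllib.request`, then uses `html.parser.HTMLParser` to collect the `href` and text of every link. Treating links as the "desired data" and the default user-agent string are both hypothetical choices for the example.

```python
from __future__ import annotations

from html.parser import HTMLParser
from urllib.request import Request, urlopen


def fetch_html(url: str, user_agent: str = "example-scraper/0.1") -> str:
    """Steps 1-2: send a request and receive the HTML in response.

    The default user-agent string is a hypothetical placeholder.
    """
    req = Request(url, headers={"User-Agent": user_agent})
    with urlopen(req, timeout=10) as resp:
        charset = resp.headers.get_content_charset() or "utf-8"
        return resp.read().decode(charset, errors="replace")


class LinkExtractor(HTMLParser):
    """Steps 3-4: identify <a> elements and extract their href and visible text."""

    def __init__(self) -> None:
        super().__init__()
        self.links: list[tuple[str, str]] = []
        self._href: str | None = None  # href of the <a> we are currently inside
        self._text: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self._href = dict(attrs).get("href")
            self._text = []

    def handle_data(self, data):
        # Collect text only while inside a link that has an href.
        if self._href is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            self.links.append((self._href, "".join(self._text).strip()))
            self._href = None


def extract_links(html: str) -> list[tuple[str, str]]:
    parser = LinkExtractor()
    parser.feed(html)
    parser.close()
    return parser.links
```

With network access, `extract_links(fetch_html("https://example.com"))` would return a list of `(href, text)` pairs; keeping fetching and parsing in separate functions makes the parsing step easy to test against saved HTML.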

B. DOM Parsing

DOM parsing involves using a web scraping tool to interact with a page's DOM (Document Object Model) and extract data from it.

HTML and CSS are not the only languages used to build web pages; there are other languages with more capabilities. Most modern pages also use JavaScript, which can add or change content after the page loads, so the data you want may not be present in the HTML the server first sends. In those cases, plain HTML parsing is not enough. Enter DOM parsing.

The Document Object Model (DOM) is a programming interface for HTML and XML (Extensible Markup Language) documents. It represents the structure of a web page as a tree of objects, which can be read and manipulated by scripts, most commonly JavaScript.
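To make the "tree of objects" idea concrete, here is a small sketch using Python's built-in `xml.dom.minidom`, which implements the W3C DOM interface for XML. It only accepts well-formed markup, so the sample is written as XHTML; a headless browser builds a much richer, live DOM after running a page's JavaScript. The product markup and class names are invented for the example.

```python
from xml.dom.minidom import parseString

# Hypothetical, well-formed XHTML snippet standing in for a product listing page.
SAMPLE = """<html><body>
  <div class="product"><span class="name">Widget</span><span class="price">9.99</span></div>
  <div class="banner"><span class="name">Sale!</span></div>
  <div class="product"><span class="name">Gadget</span><span class="price">24.50</span></div>
</body></html>"""


def text_of(node):
    """Concatenate the text nodes that are direct children of `node`."""
    return "".join(c.data for c in node.childNodes if c.nodeType == c.TEXT_NODE)


def products_from_dom(markup):
    doc = parseString(markup)  # parse the markup into a DOM tree
    results = []
    # Walk the tree: every <div>, then the <span> elements nested inside it.
    for div in doc.getElementsByTagName("div"):
        if div.getAttribute("class") != "product":
            continue  # skip elements that don't hold the data we want
        fields = {span.getAttribute("class"): text_of(span)
                  for span in div.getElementsByTagName("span")}
        results.append((fields["name"], float(fields["price"])))
    return results
```

The calls used here (`getElementsByTagName`, `getAttribute`, `childNodes`) are the same DOM methods browsers expose to JavaScript, which is why the tree-walking logic carries over to browser-based tools.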

DOM parsing can be done using a variety of techniques, including:

- using JavaScript to manipulate the DOM,

- using a headless browser to render the web page, and

- using a web scraping tool that can interact with the DOM directly.

Tools and Libraries for Web Scraping

The techniques described in the previous section require a complete suite of software programs and integrations before they can be carried out. Fortunately, web scrapers have access to a number of tools and libraries that bundle everything needed to get the job done. Some of the most popular web scraping tools and libraries include the following:

1. Selenium

Selenium is primarily a browser automation tool, used for interacting with web pages, filling out forms, and extracting data, and it has been widely adopted for web scraping and web crawling.

By leveraging Selenium's browser automation capabilities, developers can build scraping scripts that interact with dynamic websites, handle JavaScript rendering, and bypass certain anti-scraping measures.

2. Puppeteer

Puppeteer is a Node.js library that provides a high-level API for controlling headless Chrome or Chromium browsers. It can be used to automate web browsing, perform automated testing, and extract data from web pages.

3. Metapi.io

Metapi is an API-first solution designed for accessing and extracting data from the Meta Ad Library without relying on traditional web scraping. Instead of parsing raw HTML, it provides structured data such as ad creatives, copy, links, and metadata in formats like JSON or CSV. This makes it especially useful for developers who need reliable and scalable data pipelines for competitive analysis, ad monitoring, or building internal tools, while avoiding the complexity of maintaining scraping scripts and handling anti-bot restrictions.

4. BeautifulSoup

BeautifulSoup is a Python library that can be used to parse HTML and XML documents. It provides a simple API for navigating and searching the resulting parse tree and can be used to extract data from web pages.

5. Scrapy

Scrapy is a Python framework for web scraping that provides a high-level API for extracting data from web pages. It can be used to crawl websites, extract data, and store it in a structured format.

Risks and Challenges in Web Scraping

There are risks and challenges associated with web scraping. Most of these challenges stem from the fact that not all website owners want you snooping around on their data. There have been cases of web scrapers who have acted in bad faith and reaped the consequences.

Some of these challenges also stem from the fast pace of development in the industry. Here are some of the challenges a web scraper might face:

Website Blocking and IP Blocking

Websites may block web scraping activity by detecting and blocking the IP address of the scraper. This can be mitigated by using anti-detect browsers like Incogniton, which allow users to browse the internet anonymously and avoid detection.

Captchas and Anti-Scraping Measures

Websites may use captchas and other anti-scraping measures to prevent automated data extraction. This can be overcome by using techniques such as mimicking human browsing behavior and rotating user agent strings.

Handling Dynamic Websites and JavaScript-Rendered Content

Older websites were built with HTML and CSS and were fairly basic compared with the ones we have today. Modern websites are built with newer tools, which makes them considerably more sophisticated.

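For example, JavaScript can be used to add animated elements to a website, make it less static, and load content after the page itself has loaded. Because that content is missing from the HTML the server first returns, dynamic websites and JavaScript-rendered content can be difficult to scrape using traditional web scraping techniques.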
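Some JavaScript-heavy pages still ship part of their data inside the initial HTML, for example as JSON-LD structured data in a `<script type="application/ld+json">` tag. When that is the case, one workaround (a technique not described in this article, and no substitute for a headless browser when the data really is loaded later) is to read that JSON directly. The sketch below uses only Python's standard library; the sample page is hypothetical.

```python
import json
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collect and decode the contents of <script type="application/ld+json"> tags."""

    def __init__(self):
        super().__init__()
        self._in_json = False
        self._buf = []
        self.blocks = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_json = True
            self._buf = []

    def handle_data(self, data):
        if self._in_json:
            self._buf.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_json:
            self._in_json = False
            text = "".join(self._buf).strip()
            if text:
                try:
                    self.blocks.append(json.loads(text))
                except json.JSONDecodeError:
                    pass  # skip malformed blocks rather than crash


def extract_embedded_json(html):
    parser = JsonLdExtractor()
    parser.feed(html)
    parser.close()
    return parser.blocks
```

Ordinary `<script>` tags are ignored, so only the structured-data blocks are decoded.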

These risks and challenges can be mitigated with proper preparation and the necessary precautions. In the next section, we discuss some practices you can incorporate into your routine to help set up a successful web scraping session.

Best Practices for Successful Web Scraping

Here are a few practices you can implement to help get the best out of your web scraping exercises.

Identify Reliable Data Sources

The first thing to note is that web scraping is fundamentally about sourcing data, and good data only comes from good sources. It is therefore important to identify reliable data sources when web scraping, as unreliable data can lead to inaccurate analysis and decision-making. Reliable data sources include reputable websites and data providers.

Implement Proper Scraping Etiquette

Proper scraping etiquette involves respecting the terms of service of the target website and avoiding excessive scraping. It also means using appropriate user agents and headers so that your scraper can be properly identified.
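A minimal sketch of two etiquette habits using Python's standard library: sending a descriptive `User-Agent` header that identifies your scraper, and checking a site's `robots.txt` rules with `urllib.robotparser` before fetching a URL. Honoring `robots.txt` is a widely followed convention that the paragraph above does not mention explicitly; the bot name and contact URL are hypothetical placeholders.

```python
from urllib.parse import urljoin
from urllib.robotparser import RobotFileParser

# Hypothetical bot name and contact URL: replace with your own so site owners can reach you.
USER_AGENT = "example-research-bot/1.0 (+https://example.com/bot-info)"


def polite_headers() -> dict:
    """Headers that identify the scraper honestly instead of impersonating a browser."""
    return {"User-Agent": USER_AGENT, "Accept": "text/html"}


def load_robots(site_root: str) -> RobotFileParser:
    """Download and parse <site>/robots.txt (needs network access)."""
    rp = RobotFileParser(urljoin(site_root, "/robots.txt"))
    rp.read()
    return rp


def rules_from_text(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt content you already have, e.g. a cached copy."""
    rp = RobotFileParser()
    rp.parse(robots_txt.splitlines())
    return rp
```

Before each request, `rules.can_fetch(USER_AGENT, url)` says whether the URL is allowed, and `rules.crawl_delay(USER_AGENT)` returns the site's requested delay in seconds, or `None` if it sets none.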

Use Rate-Limit Handling Techniques to Avoid Disruption

Rate limits and other disruptions can be a challenge when web scraping. Use techniques such as browsing with proxies, rotating user agents, and implementing delays between requests to handle them.
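The "delays between requests" technique can be sketched as a small wrapper that enforces a minimum interval between requests and backs off exponentially when the server answers 429 (Too Many Requests) or 503 (Service Unavailable). This covers only the delay part; proxies and user-agent rotation are not shown. The `fetch` callable returning `(status_code, body)` is a hypothetical interface chosen so the sketch does not depend on a particular HTTP library, and the default timings are illustrative, not recommendations.

```python
import random
import time


class PoliteFetcher:
    """Space out requests and back off when the server signals overload.

    `fetch` is any callable that takes a URL and returns (status_code, body).
    Injecting it (and `sleep`/`clock`) is an assumption that keeps the sketch
    independent of a specific HTTP library and easy to test offline.
    """

    RETRY_STATUSES = (429, 503)  # "Too Many Requests" and "Service Unavailable"

    def __init__(self, fetch, min_interval=2.0, max_retries=4, base_backoff=1.0,
                 sleep=time.sleep, clock=time.monotonic):
        self.fetch = fetch
        self.min_interval = min_interval  # minimum seconds between requests
        self.max_retries = max_retries
        self.base_backoff = base_backoff
        self.sleep = sleep
        self.clock = clock
        self._last_request = None

    def _wait_for_slot(self):
        # Enforce the fixed delay between consecutive requests.
        if self._last_request is not None:
            remaining = self.min_interval - (self.clock() - self._last_request)
            if remaining > 0:
                self.sleep(remaining)
        self._last_request = self.clock()

    def get(self, url):
        for attempt in range(self.max_retries + 1):
            self._wait_for_slot()
            status, body = self.fetch(url)
            if status not in self.RETRY_STATUSES:
                return status, body
            if attempt < self.max_retries:
                # Exponential backoff (1s, 2s, 4s, ... with the defaults) plus random jitter.
                delay = self.base_backoff * (2 ** attempt)
                self.sleep(delay + random.uniform(0, self.base_backoff))
        return status, body  # give up and report the last response
```

The random jitter keeps many scrapers (or many workers of one scraper) from retrying in lockstep after the same error.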

Leverage the Privacy Features of Anti-Detect Browsers like Incogniton

Anti-detect browsers can be used to enhance privacy and security in web scraping activities. They allow users to browse the internet anonymously and avoid detection by websites that may be blocking or monitoring their activity.

Leveraging these privacy features, as offered by anti-detect browsers like Incogniton, is particularly important for safeguarding privacy and enhancing security during web scraping.

In the following section, we look at how anti-detect browsers play a pivotal role in the success and security of web scraping.

Using an Anti-detect Browser for Secure Web Scraping

When choosing a web scraping tool, it is important to consider factors such as ease of use, performance, and compatibility with the target website, but most importantly anonymity, privacy, and security. Meeting all of these requirements, however, usually means using a myriad of tools at the same time.

The best anti-detect browsers provide web scrapers with an additional layer of privacy and security. They allow users to browse the internet anonymously and avoid detection by websites that may be blocking or monitoring their activity. This significantly mitigates the risks associated with web scraping.

And if you opt for an anti-detect browser like Incogniton, you will find that it integrates all the essential web scraping libraries and tools you need, such as Selenium and Puppeteer. Here are some of the benefits of using anti-detect browsers like Incogniton.

Anonymity and Privacy Protection

Anti-detect browsers are primarily privacy-centric browsers and they have specific built-in features to give users an unrivaled level of privacy. Some of these features include browser fingerprint spoofing, Canvas and WebGL disabling, and proxy integration.

Thus, web scrapers will find it easy to implement the various techniques they need to enhance anonymity and avoid detection. For instance, they can use the browser’s direct proxy integrations to carry out IP rotation, which involves changing the IP address of the web scraper to avoid detection by websites that may be blocking or monitoring their activity.

Bypassing Anti-Scraping Measures & Detection Mechanisms Employed by Websites

Anti-scraping measures such as captchas and IP blocking can be overcome by using techniques such as rotating user agents, using proxies, and implementing delays between requests. You can manage these anti-scraping measures effectively with an anti-detect browser.

Effective Session Management for Successful Scraping

Session management involves managing the state of the web scraper between requests. This can be done using cookies or by storing session data in memory. Effective session management is important for successful web scraping, as it allows the web scraper to maintain its state between requests.
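A minimal sketch of the in-memory approach described above, using the standard library's `http.cookies.SimpleCookie` to remember cookies from `Set-Cookie` response headers and send them back in a `Cookie` header. The class and method names are hypothetical, and the sketch deliberately ignores domain, path, and expiry rules; for those, `http.cookiejar.CookieJar` used with `urllib.request.HTTPCookieProcessor` is the fuller standard-library option.

```python
from http.cookies import SimpleCookie


class InMemorySession:
    """Hypothetical helper that keeps cookies between requests in memory.

    It only tracks cookie names and values, not domain, path, or expiry.
    """

    def __init__(self):
        self.jar = SimpleCookie()

    def remember(self, set_cookie_headers):
        # Store (or update) cookies from the Set-Cookie headers of a response.
        for header in set_cookie_headers:
            self.jar.load(header)

    def cookie_header(self):
        # Build the Cookie header value to send with the next request.
        return "; ".join(f"{name}={morsel.value}" for name, morsel in self.jar.items())

    def clear(self):
        # Drop all state, e.g. to start a fresh session between scraping runs.
        self.jar.clear()
```

Keeping one session object per scraping profile is a simple way to keep each profile's state separate.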

Most anti-detect browsers have data storage features that enable the user to keep browsing data from each of the browsing profiles separate and easily accessible from any device.

Modifying and Managing Cookies for Efficient Data Extraction

Cookies are small pieces of data that websites store in a user's browser. They can be used to track user activity and identify web scrapers.

Cookies can also be used to store session data and maintain state between requests. Modifying and managing cookies can help to optimize data extraction by ensuring that the web scraper is authenticated and has access to the necessary data.

Incogniton has a cookie management feature that allows you to manage cookies effectively. You can delete cookies between requests or use a separate browser profile for web scraping activities.

Browser Automation

Browser automation involves using a web scraping tool to automate web browsing tasks such as clicking on links and filling out forms. This can increase efficiency and reduce the time required for web scraping. Anti-detect browsers are packed with these automation features. For example, Incogniton has a "paste as human typing" feature for filling forms automatically.

Handling JavaScript and Extracting Dynamic Content

JavaScript and dynamic content can be difficult to scrape using traditional web scraping techniques. Incogniton is integrated with tools such as Selenium and Puppeteer, which makes it easy to interact with JavaScript and extract dynamic content.

Future Trends and Innovations in Web Scraping

It is unlikely that web scraping practices will fade away as new technologies are developed. On the contrary, these technologies will only enhance and refine the process.

For instance, the integration of AI and machine learning will make it possible to automate more complex web scraping tasks, such as extracting data from images and other content formats aside from text.

The future holds potential advancements in Natural Language Processing (NLP), aided by vector databases and large language models (LLMs). NLP can effectively extract data from unstructured text, including product reviews and social media posts. These AI capabilities promise to deliver valuable insights for businesses and researchers alike, but they also pose privacy threats.

Overall, if web scraping as we currently know it were to eventually decline in popularity, it would likely be due to the emergence of more advanced methods for sourcing, collecting, and processing large volumes of data, which would be a win-win situation.

Conclusion

Web scraping plays a crucial role in extracting data from websites, and it is an essential tool for businesses and researchers who need to collect data from the internet.

However, to ensure responsible and ethical web scraping practices, it is essential to respect the terms of service of the target website, refrain from excessive scraping, and incorporate the best practices discussed in the article.

Through the use of anti-detect browsers like Incogniton, you can ensure privacy and security during web scraping activities. These browsers offer enhanced protection by concealing the user's identity and preventing detection while conducting web scraping tasks.

By adhering to responsible practices, web scraping can continue to serve as a valuable tool for data collection and enable businesses and researchers to gather the information they need from the vast resources available on the internet.