In e-commerce, pricing is never static. It’s fluid, reactive, and increasingly algorithm-driven. On major marketplaces and retail websites, prices can change multiple times within a single day, driven by competition, demand signals, inventory levels, and automated repricing systems.

At the same time, consumers have become more price-sensitive and informed. Studies consistently show that over 70% of online shoppers compare prices across multiple platforms before making a purchase.

This creates a simple reality: if you are not tracking the market, you are likely mispricing your products.

The growth of the web scraping software market—now valued at over $700 million—reflects how critical external data has become to pricing decisions. But within this space, two concepts are often misunderstood or used interchangeably: price scraping and price monitoring. They are related, but not the same.

This distinction matters. Choosing the wrong approach can lead to wasted resources, delayed decisions, or incomplete insights. This article breaks down both concepts clearly, explains how they differ in practice, and helps you decide which one fits your business model, technical capacity, and growth stage.

What is Price Scraping?

Price scraping is the targeted extraction of pricing data from websites.

At its core, it is a data collection process. A script, bot, or automated browser visits a product page, reads the underlying HTML (or API responses), extracts pricing information and related metadata, and exports it in a structured format such as CSV, JSON, or a database.
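
The flow described above (read the HTML, pull out the price, export it) can be sketched in a few lines. This is only an illustration under stated assumptions: the markup in `SAMPLE_HTML`, the `price` class name, the `data-currency` attribute, and the example URL are all hypothetical, and it uses Python's standard-library `html.parser` rather than BeautifulSoup or Scrapy so that it runs without extra installs.

```python
import csv
import io
import json
from datetime import datetime, timezone
from decimal import Decimal
from html.parser import HTMLParser

# Hypothetical product page markup; real sites differ and change often.
SAMPLE_HTML = """
<html><body>
  <h1 class="product-title">Wireless Mouse</h1>
  <span class="price" data-currency="USD">$24.99</span>
</body></html>
"""


class PriceParser(HTMLParser):
    """Collects the text of the first element whose class list contains 'price'."""

    def __init__(self):
        super().__init__()
        self._in_price = False
        self.price_text = None
        self.currency = None

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        classes = (attrs.get("class") or "").split()
        if "price" in classes and self.price_text is None:
            self._in_price = True
            self.currency = attrs.get("data-currency")

    def handle_data(self, data):
        if self._in_price and data.strip():
            self.price_text = data.strip()
            self._in_price = False


def extract_price(html, url):
    """Return one structured record for the page, stamped with the scrape time."""
    parser = PriceParser()
    parser.feed(html)
    if parser.price_text is None:
        raise ValueError(f"no price element found at {url}")
    # Strip the currency symbol and thousands separators before converting.
    amount = Decimal(parser.price_text.lstrip("$").replace(",", ""))
    return {
        "url": url,
        "price": str(amount),
        "currency": parser.currency,
        "scraped_at": datetime.now(timezone.utc).isoformat(),
    }


record = extract_price(SAMPLE_HTML, "https://example.com/wireless-mouse")

# Export the same record as JSON and as CSV.
as_json = json.dumps(record)
buffer = io.StringIO()
writer = csv.DictWriter(buffer, fieldnames=list(record))
writer.writeheader()
writer.writerow(record)
as_csv = buffer.getvalue()
```

The `scraped_at` field matters more than it looks: without it, the record gives no indication of how stale the price is.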

Common tools used for this include frameworks like Scrapy, browser automation tools like Playwright or Puppeteer, and lightweight parsing libraries such as BeautifulSoup or Cheerio.

The defining feature of price scraping is that it captures a snapshot in time.

You decide what data you need, identify the sources, and run a script to collect that information at that specific moment. The result is a dataset that reflects current pricing conditions—but only at the time of extraction.

Key Characteristics of Price Scraping:

- Project-Based: It's typically executed for a specific, defined project. For example, scraping prices for 50 key products from three main competitors before a quarterly pricing strategy meeting.

- Technical Execution: It relies on the core web scraping techniques mentioned above. Tools like BeautifulSoup (Python) or Cheerio (JavaScript) are common for simpler tasks, while browser automation tools such as Playwright, Puppeteer, or Selenium handle dynamic, JavaScript-rendered content.

- Manual Analysis: The output is usually a dataset (like a CSV or JSON file) that requires manual analysis, interpretation, and action by a pricing analyst or manager.

- Lower Frequency: Scrapes might be run daily, weekly, or even monthly, depending on the project's needs.

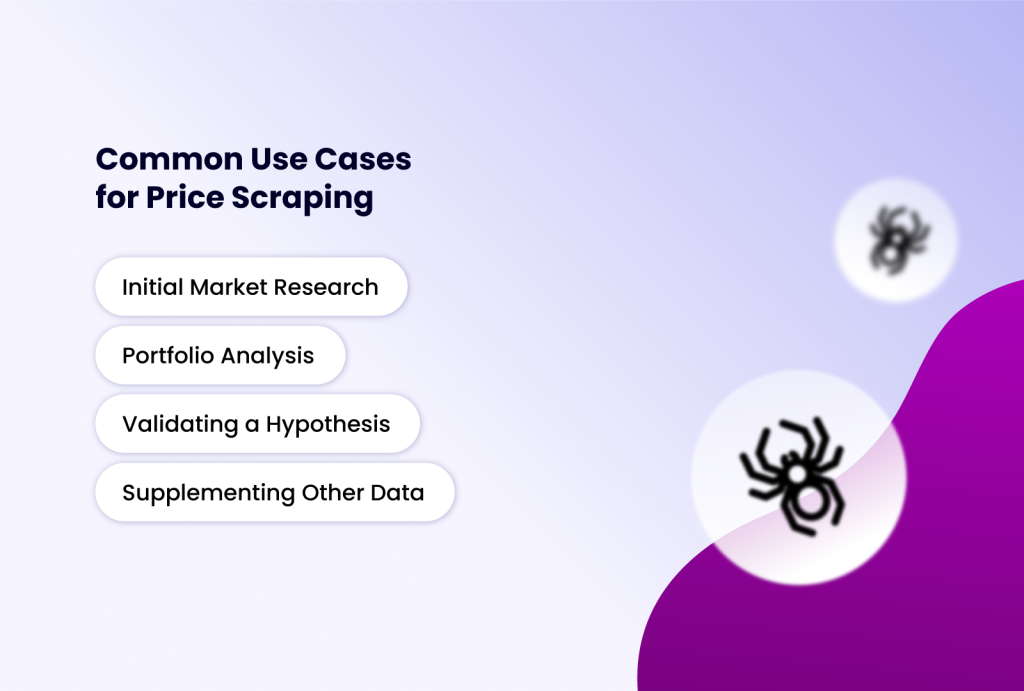

Common Use Cases for Price Scraping:

- Initial Market Research: Understanding the current competitive price landscape before launching a new product.

- Portfolio Analysis: Conducting a one-off audit of how your entire product catalog stacks up against competitors.

- Validating a Hypothesis: Manually checking if a suspected pricing trend on a few select items is accurate.

- Supplementing Other Data: Gathering pricing data to enrich a broader market analysis report.

What is Price Monitoring?

Price monitoring is the continuous tracking of prices over time.

It builds on the same underlying mechanism as scraping, but transforms it into a persistent system. Instead of collecting a single snapshot, price monitoring repeatedly collects data at defined intervals, stores it historically, and presents it in a way that supports decision-making.
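
What separates this from a one-off scrape is mostly the storage and the schedule. A minimal sketch, assuming a single SQLite table and a stand-in `fake_fetch` function in place of a real scraper (the table layout, SKU, and competitor names are all hypothetical):

```python
import sqlite3
from datetime import datetime, timedelta, timezone


def open_store(path=":memory:"):
    """Open (or create) the price history store."""
    conn = sqlite3.connect(path)
    conn.execute(
        """CREATE TABLE IF NOT EXISTS price_history (
               sku TEXT NOT NULL,
               competitor TEXT NOT NULL,
               price_cents INTEGER NOT NULL,
               observed_at TEXT NOT NULL
           )"""
    )
    return conn


def record_observation(conn, sku, competitor, price_cents, observed_at):
    """Append one observation; rows are never updated, only added."""
    conn.execute(
        "INSERT INTO price_history VALUES (?, ?, ?, ?)",
        (sku, competitor, price_cents, observed_at.isoformat()),
    )
    conn.commit()


def price_series(conn, sku, competitor):
    """Return (observed_at, price_cents) rows in chronological order."""
    rows = conn.execute(
        "SELECT observed_at, price_cents FROM price_history "
        "WHERE sku = ? AND competitor = ? ORDER BY observed_at",
        (sku, competitor),
    )
    return rows.fetchall()


def fake_fetch(run_index):
    """Stand-in for a real scrape; returns a canned price per run."""
    return [2499, 2499, 2299][run_index]


conn = open_store()
start = datetime(2024, 1, 1, tzinfo=timezone.utc)
for i in range(3):
    # A real system would be triggered by a scheduler (cron, a job queue)
    # every six hours; here the timestamps are simulated.
    observed_at = start + timedelta(hours=6 * i)
    record_observation(conn, "MOUSE-01", "shop-a", fake_fetch(i), observed_at)

history = price_series(conn, "MOUSE-01", "shop-a")
```

Keeping every observation, rather than overwriting the latest price, is what makes trend charts and later analysis possible.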

This is not just about collecting data—it’s about generating ongoing intelligence.

Most price monitoring setups rely on dedicated platforms or SaaS tools that handle infrastructure, scaling, storage, and visualization. These systems continuously track competitor prices, detect changes, and trigger alerts or automated actions.

Key Characteristics of Price Monitoring:

- Ongoing & Automated: It's a continuous process. Once set up, the system runs on autopilot, collecting data at regular, high-frequency intervals (e.g., every few hours or even minutes for volatile markets).

- Platform-Centric: It often utilizes dedicated price monitoring software or SaaS platforms. These platforms handle the scraping infrastructure, data storage, visualization, and alerting.

- Action-Oriented Insights: The focus is on trends and actionable intelligence. Dashboards show price histories, movement graphs, and competitor positioning. Alerts notify you the moment a key competitor changes their price.

- High-Frequency & Scalable: Designed to track hundreds or thousands of SKUs across dozens of sites reliably and without interruption.
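
The alerting behaviour described in the list above boils down to comparing the latest snapshot with the previous one. A rough sketch, assuming snapshots are plain dictionaries keyed by `(sku, competitor)` and that a 1% move is worth an alert (both the data shape and the threshold are illustrative choices, not how any particular platform works):

```python
from dataclasses import dataclass


@dataclass
class PriceAlert:
    sku: str
    competitor: str
    old_price: float
    new_price: float
    change_pct: float


def detect_changes(previous, current, threshold_pct=1.0):
    """Compare two {(sku, competitor): price} snapshots and return alerts
    for prices that moved by at least threshold_pct in either direction."""
    alerts = []
    for key, new_price in current.items():
        old_price = previous.get(key)
        if old_price is None or old_price == 0:
            continue  # new listing or unusable baseline; nothing to compare
        change_pct = (new_price - old_price) / old_price * 100
        if abs(change_pct) >= threshold_pct:
            sku, competitor = key
            alerts.append(
                PriceAlert(sku, competitor, old_price, new_price, round(change_pct, 2))
            )
    return alerts


previous = {("MOUSE-01", "shop-a"): 25.00, ("MOUSE-01", "shop-b"): 26.00}
current = {
    ("MOUSE-01", "shop-a"): 22.50,  # 10% drop: should alert
    ("MOUSE-01", "shop-b"): 26.10,  # under 1% move: ignored
    ("KEYB-02", "shop-a"): 49.00,   # first sighting: no baseline yet
}
alerts = detect_changes(previous, current)
```

In a production setup the returned alerts would be pushed to email, chat, or a repricing service; that delivery step is left out here.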

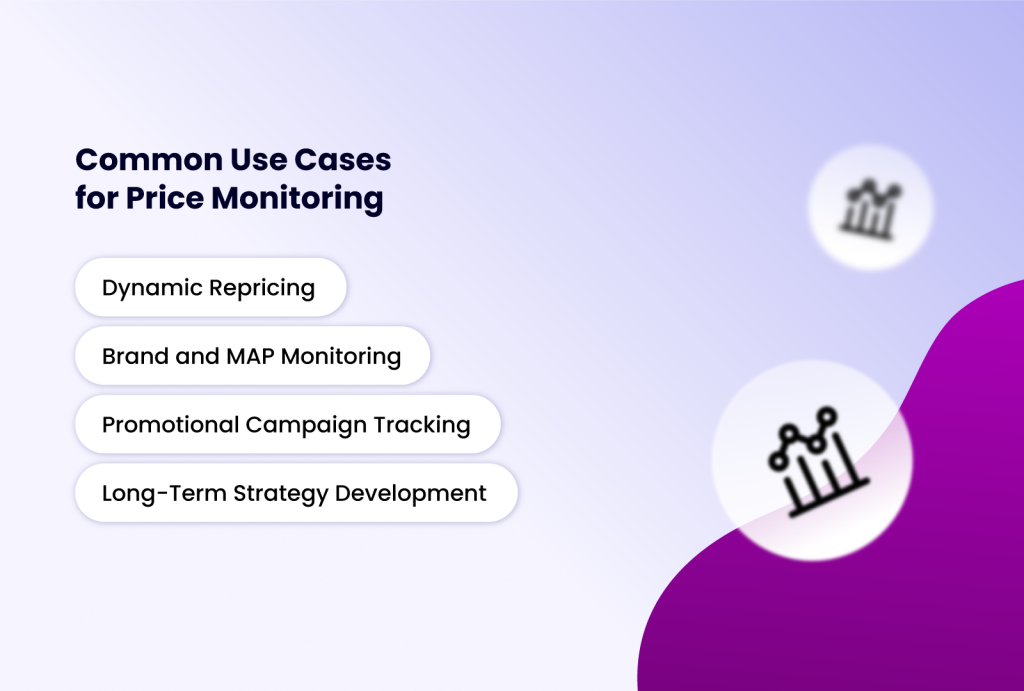

Common Use Cases for Price Monitoring:

- Dynamic Repricing: Directly integrating with e-commerce platforms (like Amazon Seller Central or Shopify apps) to automatically adjust your prices based on predefined rules relative to competitors.

- Brand and MAP (Minimum Advertised Price) Monitoring: Ensuring retail partners are complying with your pricing policies.

- Promotional Campaign Tracking: Monitoring how competitors react to your sales or promotions and tracking the effectiveness of their campaigns.

- Long-Term Strategy Development: Analyzing seasonal trends, price elasticity, and competitor pricing strategies over quarters or years.
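
Two of the use cases above, rule-based repricing and MAP compliance, reduce to simple comparisons once the monitored prices are available. The sketch below assumes one possible rule (undercut the cheapest competitor by one cent, never leaving a floor-to-ceiling band); the function names, retailer names, and amounts are hypothetical, and real repricing tools expose their own rule formats.

```python
from decimal import Decimal, ROUND_HALF_UP

CENT = Decimal("0.01")


def reprice(competitor_prices, floor, ceiling, undercut=Decimal("0.01")):
    """Undercut the cheapest competitor by a fixed amount, clamped to [floor, ceiling]."""
    if not competitor_prices:
        return ceiling  # no competition observed: fall back to the ceiling price
    target = min(competitor_prices) - undercut
    clamped = max(floor, min(ceiling, target))
    return clamped.quantize(CENT, rounding=ROUND_HALF_UP)


def map_violations(advertised, map_price):
    """Return retailers whose advertised price is below the MAP, sorted by name."""
    return sorted(name for name, price in advertised.items() if price < map_price)


floor, ceiling = Decimal("20.00"), Decimal("29.99")

# Cheapest competitor is 24.99, so the rule lands one cent below it.
new_price = reprice([Decimal("24.99"), Decimal("26.50")], floor, ceiling)

# A competitor below our floor does not drag us under it.
floored_price = reprice([Decimal("18.00")], floor, ceiling)

violators = map_violations(
    {"retailer-a": Decimal("19.99"), "retailer-b": Decimal("22.00")},
    map_price=Decimal("21.00"),
)
```

The floor is the important design choice: without it, two automated repricers undercutting each other can push a price toward zero.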

Price Scraping vs Price Monitoring: A Side-by-Side Comparison

| Feature | Price Scraping | Price Monitoring |
| --- | --- | --- |
| Core Objective | Extract a specific set of price data at a point in time. | Continuously track prices to identify trends and generate actionable insights. |
| Nature | Project-based, tactical tool. | Ongoing, strategic system. |
| Frequency | Intermittent (one-time, daily, weekly). | Continuous and high-frequency (hourly, multiple times per day). |
| Output | Raw dataset (CSV, JSON). Requires manual analysis. | Dashboards, alerts, historical charts, and often API feeds for automation. |
| Primary User | Developers, data analysts, and marketers running a specific project. | Pricing managers, e-commerce operations teams, business intelligence units. |
| Technical Overhead | High. Requires setup, script maintenance, and handling of blocks. | Low (with SaaS). The platform manages infrastructure, proxies, and anti-bot evasion. |
| Cost Structure | Lower upfront (developer time, open-source tools). | Recurring subscription fee for software/platform. |

When to Use Price Scraping vs Price Monitoring

So, which one do you need? The answer lies in your business goals, resources, and scale.

Choose Price Scraping if:

- You have a one-off or infrequent need for pricing data.

- You have in-house technical expertise (developers who can build and maintain scripts).

- Your budget is limited, and you can't justify a monthly SaaS subscription.

- You need highly customized data extraction that off-the-shelf monitoring tools can't provide.

Choose a Price Monitoring Platform if:

- Pricing is a core, ongoing component of your competitive strategy (e.g., you sell on Amazon or run your own e-commerce store).

- You need to track hundreds or thousands of SKUs across multiple competitors.

- You require real-time or frequent alerts to react quickly to market changes.

- You want historical data and trend analysis without building a data warehouse.

- You lack developer resources and need a "set and forget" solution with a user-friendly dashboard.

The Hybrid Approach

In reality, many businesses do not start with full-scale monitoring. They begin with scraping.

A team might run manual scrapes to validate whether competitor pricing is volatile enough to justify continuous tracking. Once that need is clear, they transition to a monitoring system.
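
One way to make that validation step concrete is to summarize how often a price actually moved across a handful of manual scrapes. The sketch below is an assumption-laden illustration: the sample prices are invented, and the 0.25 change-rate cutoff is an arbitrary placeholder rather than an industry benchmark.

```python
from statistics import mean, pstdev


def volatility_summary(prices):
    """Summarize a chronological list of scraped prices for one product."""
    if len(prices) < 2:
        raise ValueError("need at least two observations")
    # Count how many consecutive scrapes show a different price.
    changes = sum(1 for a, b in zip(prices, prices[1:]) if a != b)
    avg = mean(prices)
    return {
        "observations": len(prices),
        "changes": changes,
        "change_rate": changes / (len(prices) - 1),
        "coefficient_of_variation": pstdev(prices) / avg if avg else 0.0,
    }


def worth_monitoring(summary, min_change_rate=0.25):
    # The 0.25 cutoff is an arbitrary illustrative threshold, not a benchmark.
    return summary["change_rate"] >= min_change_rate


# Seven daily scrapes of the same competitor listing (made-up numbers).
volatile = volatility_summary([24.99, 24.99, 22.99, 22.99, 25.49, 24.99, 24.99])
stable = volatility_summary([19.99] * 7)

volatile_needs_monitoring = worth_monitoring(volatile)
stable_needs_monitoring = worth_monitoring(stable)
```

If most tracked products look like the `stable` case, periodic scraping is probably enough; if many look like the `volatile` case, daily snapshots are already missing price moves.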

Some technically mature teams go further and build internal monitoring systems using a combination of scraping frameworks, proxy networks, and browser automation tools.

This is where tools like Incogniton become relevant.

Instead of relying solely on basic scripts, teams use anti-detect browsers to manage multiple sessions, avoid detection, and ensure reliable data collection across different environments. This is particularly useful when scraping sites with aggressive anti-bot protections.

The hybrid approach offers a balance:

- Control and customization from scraping

- Continuity and automation from monitoring

But it comes with complexity. If you lack engineering resources, a dedicated monitoring platform is usually the more practical choice.

Conclusion

Price scraping and price monitoring are two sides of the same data-driven coin, but they serve fundamentally different purposes in the business intelligence toolkit. Price scraping is your precision data-gathering tool—ideal for targeted, project-based insights. Price monitoring is your always-on strategic radar—essential for businesses where pricing is a continuous battleground.

For e-commerce leaders, retailers, and brands in competitive markets, the transition from sporadic scraping to systematic monitoring is often a key milestone in achieving data-driven pricing maturity. This journey requires robust technical infrastructure to overcome the significant barriers modern websites erect.

Tools like Incogniton, with anti-detect fingerprinting and built-in automation for Selenium and Puppeteer, provide the stealth and reliability needed both to run scraping projects and to lay the foundation of a custom monitoring system.

By clearly understanding your objectives and matching them with the appropriate technique and technology stack, you can transform raw web data into a formidable competitive advantage.